Generative AI and the Law

AI is here already – with the power to change the legal profession

Generative AI and large language models have the potential to transform the practice of law in many different ways. But if they are to be fully embraced, they must be accurate and have reliable data to draw on.

The artificial intelligence tool ChatGPT has taken the world by storm since its November 2022 launch. But in many sectors of the economy, this was simply generative AI’s public debut. In the legal world, for example, law firms and others have been examining AI’s potential for a long time – putting it to practical and sometimes game-changing use (beyond asking ChatGPT to write courtroom speeches in the style of Beyoncé).

The potential applications for AI in the legal world are immense and include composing client briefs, producing complex analyses from troves of documents, and helping firms with limited resources compete with the largest groups. AI can help to conduct due diligence in corporate mergers and significantly aid legal education and knowledge acquisition in complex and fast-moving areas.

One high-profile demonstration that bolstered conversations about generative AI’s legal capabilities came when the latest iteration of the underlying model (GPT-4) recently passed the bar exam. But lawyers and law students are already significantly more aware than the general population of generative AI’s potential beyond such eye-catching stunts. According to the results of a survey released by LexisNexis in August 2023, about half of all lawyers believe generative AI tools will significantly transform the practice of law, and nearly all (92 percent) believe they will have at least some impact. Some 77 percent believe generative AI tools will increase the efficiency of lawyers, paralegals, or law clerks, and 63 percent also believe generative AI will change the way law is taught and studied.

Mike Walsh, CEO, LexisNexis Legal & Professional

Mike Walsh, CEO of LexisNexis Legal & Professional, part of RELX, began thinking about how generative AI might transform the profession long before the advent of ChatGPT. Having used AI-based technologies for many years, LexisNexis was aware of their tremendous potential, and had started to study how to draw on its vast repository of legal information to develop a reliable proprietary language model, a specific type of generative AI model designed to process and generate language. Generative AI “makes all kinds of things possible,” he says.

A slow revolution until now

The first AI-linked tools have been around in the legal world for well over a decade. But so far many lawyers remain cautious, however exciting they may find the technology’s potential. Greg Lambert, chief knowledge services officer at Jackson Walker LLP, a Texas-based firm with almost 500 attorneys, recalls being at a conference with in-house counsel from an array of companies. In the hallways, during coffee breaks and over lunch, the buzz was all about the latest AI breakthroughs, and what they might do for (and to) the legal profession, he says, adding: “There’s an expectation that this will change everything.”

But even if his peers believe that generative AI will make legal services “better, faster, cheaper” to deliver, Lambert says they are not envisaging that “someone will just go flip a switch and use generative AI tools and suddenly bills will be cut in half.” It’s going to take time and experimentation to understand the full horizon of possibilities, he argues.

Greg Lambert, Chief Knowledge Services Officer, Jackson Walker LLP

Danielle Benecke, Founder & Global Head, Baker McKenzie Machine Learning

To varying degrees, that experimentation is already underway, with giant global partnerships moving rapidly up the learning curve. Just over a year before the release of ChatGPT, Danielle Benecke was named founder and head of Baker McKenzie Machine Learning, an entirely new kind of role. “We work on questions of how to combine machine learning and other types of AI with our expertise to create new services,” Benecke explains. Her team studies how to apply generative AI and machine learning to the strategic decision-making process. The ultimate goal? “To figure out what the high-value service of law firms of the future looks like,” Benecke says.

Baker McKenzie has examined critical tasks that are time-consuming for lawyers to tackle using traditional research tools. For instance, a perennial issue for Baker McKenzie’s clients is understanding global trade sanctions and identifying related risks. So Benecke’s team undertook a pilot study to figure out how generative AI and data science might help them better advise those clients. “We looked at client supply chains that were thought to be vulnerable to sanctions and other trade restrictions and used data provided by the client and from public sources to identify risks – at scale and rapidly,” she says. “When you do that at scale, you discover things that humans on their own might not recognize.”

It’s this kind of potential that lies behind studies by the likes of Goldman Sachs, which highlight opportunities for evolution and efficiency, even if the technology, in its infancy, sometimes suffers from teething problems.

Dangers of machine hallucinations

One of those teething problems can give considerable pause for thought: AI has an unfortunate habit of making stuff up.

“Experimenting with these models is both terrifying and fun,” says Ashley B. Armstrong, assistant clinical professor of law at the University of Connecticut School of Law, who has been researching ChatGPT and generative AI in the legal writing context. “When I asked research-related questions, ChatGPT spit back something that sounds very intelligent [and] provided a conglomeration of citations that look real but don’t actually exist.” The more obscure the topic – say, Connecticut’s land-use statute – the more likely that ChatGPT was to “hallucinate,” Armstrong says. The model can produce wildly inaccurate information on which users rely at their peril.

Ashley Armstrong, Assistant Clinical Professor of Law, University of Connecticut

Jamie Buckley, Chief Product Officer, LexisNexis Legal & Professional

Experiences like that underscore the need for reliable tools customized to meet lawyers’ very specific requirements. General-purpose models that draw widely on the mass of information available to all worldwide can be wrong – and worse still, they can be wrong with remarkable aplomb and confidence. For Jamie Buckley, however, that can be mitigated with the right kind of generative AI model.

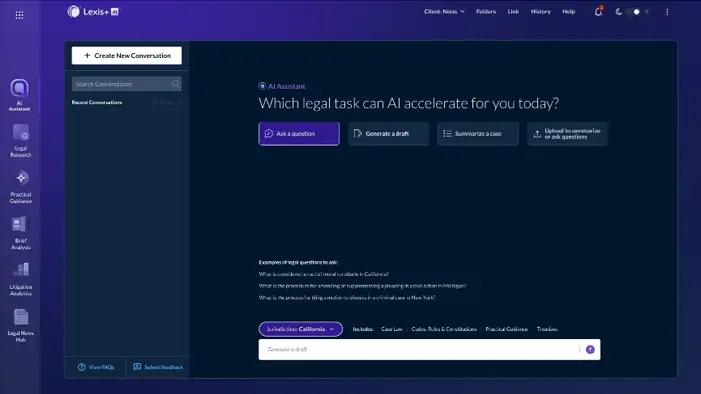

Buckley is chief product officer at LexisNexis Legal & Professional and has been overseeing the incorporation of AI and machine learning into products like Lexis+ for years. “We’re not building a general model that has to answer every question that users can imagine,” he says.

Given that a targeted large language model needs to be trained on the widest possible universe of strictly relevant information, LexisNexis’s 144bn legal and news documents and records that aren’t publicly accessible on the internet are a huge help. That extensive knowledge base means lawyers can easily delve into the latest legal decisions across multiple jurisdictions, for example, even without the resources of larger firms. In Buckley’s view, these technologies can eliminate much of the “drudge work” associated with legal research, document drafting, and other central tasks, and allow lawyers to focus on adding what only they can: judgment, context and understanding.

Overcoming copyright and IP concerns

Copyright concerns around AI training data and information databases remain contentious, and some companies have been accused of stealing others’ proprietary data.

While general-purpose models like ChatGPT draw on whatever users submit and on publicly available information, lawyers have a professional duty of confidentiality to their clients, meaning that LexisNexis must ensure that search terms or briefs, for example, are not shared and do not become identifiable.

“The number one rule since the birth of the internet is ‘don’t do anything stupid’ online,” says Lambert of Jackson Walker. “You need to think ahead of time: are you and your client comfortable?” In the case of LexisNexis, with which many law firms have a trusted relationship, “there’s a high level of confidence that if we type something into Lexis+, my opposing counsel won’t get insight into my legal strategy or argument.” Similarly, he says, lawyers will likely have more confidence in the provenance of the data on which the model has been trained.

AI will augment lawyers’ work, not replace it

“I do not see AI replacing an attorney,” says Joel Murray, an attorney with the law firm of McKean Smith of Portland, Oregon and Vancouver, Washington. He adds, “Clients hire an attorney for the attorney’s knowledge, experience, and ability to interpret and apply legal precedent. While AI may be able to identify relevant statutes, regulations, and case law, there is a human aspect of law practice that provides guidance to clients that AI is not likely to replace. If properly developed, AI can be another means by which attorneys can increase their productivity, and obtain optimal results for clients.”

Moreover, notes Armstrong, generative AI – which predicts text based on the existing information that it is trained on – simply can’t replace human lawyers when it comes to taking the law in new directions, at least for now. “Reaffirming the status quo is not going to help move the needle,” she says, pointing to the importance of arguing for fresh interpretations of established laws to improve systems and combat injustice. Generative AI at this moment in time, she adds, can’t replace innovative thought or human creativity.

It’s tough to get lawyers – like members of most other professions – to embrace radically new ways of working. But most agree that ultimately, generative AI tools will prove transformative, however difficult it is to predict how – just as we couldn’t begin to imagine the full impact of the internet and social media on our lives back in the 1990s.

Joel F. Murray, Attorney, McKean Smith LLC

AI won’t replace lawyers, but lawyers who use AI will replace lawyers who don’t

“The technology is moving so quickly, it’s so new that all of us in the industry – even those who are AI experts – are trying to figure it out,” says Benecke, laughing. Her own instinct? The lawyer of the future in firms such as Baker McKenzie will focus on using technology to augment human expertise that can’t be replicated in even the most sophisticated AI models. “We call this machine learning-enabled judgment, and it includes the ability to contextualize the law – to tell the client what to do – in the full circumstances of their business, the market and society.”

For his part, Mike Walsh places absolute confidence in a single fact. “Lawyers are very, very smart people who, throughout history, have figured out how to add more value, generate more business” and generate better advice about legal strategies (such as when it makes economic sense to settle a case). “Today, the tools still aren’t sufficient to enable all lawyers to do that for their clients.” But he says that won’t remain the case for long and the nature of legal work is about to be transformed.

Lambert agrees with Walsh’s analysis and takes it a step further. “There’s a catchphrase making the rounds right now,” he says. “AI won’t replace lawyers, but lawyers who use AI will replace lawyers who don’t.”

Generative AI & the Legal Profession

More than 4,000 lawyers, law students and consumers surveyed
